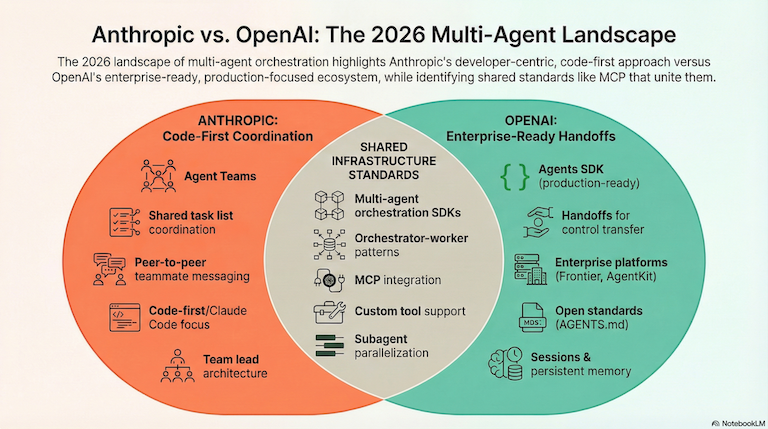

Anthropic and OpenAI Agent Orchestration: Where the Giants Stand in 2026

Multi-agent tooling from Anthropic and OpenAI, current capabilities, and what it means for developers building with AI agents.

Multi-agent orchestration is arriving much sooner than most of us expected. Gartner reports that inquiries about multi-agent systems (MAS) surged in 2025, and predicts that by the end of 2026, 40% of enterprise applications will include task-specific AI agents.

Both Anthropic and OpenAI have responded with official orchestration tooling. Here’s where each company stands, what they’ve shipped, and what it means for developers building multi-agent systems.

Anthropic’s Approach: Agent Teams and the Claude Agent SDK

Anthropic has taken a code-first approach to multi-agent coordination, boldly building orchestration directly into Claude Code’s growing set of capabilities.

Possibly related: the recent Claude Code OpenClaw usage bans might be a sign of Anthropic’s intention to resist interoperability between model providers and developer-led innovation in this space. We hope not!

Agent Teams (Teammates, Claude Code)

Currently an opt-in experimental feature (as of 22 Feb 2026).

Agent Teams launched alongside Opus 4.6 as an experimental feature. Claude Code users who want to try it need to enable it with:

    {
      "env": {
        "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
      }
    }

The architecture follows an orchestrator-worker pattern:

- Team lead: The main Claude Code session that coordinates work and synthesizes results

- Teammates: Separate Claude Code instances, each with its own context window

- Shared task list: Teammates claim and complete tasks independently

- Peer-to-peer messaging: Teammates communicate directly, not just through the lead

| Feature | Capability |

|---|---|

| Context | Each teammate has independent context |

| Communication | Direct messaging between teammates |

| Coordination | Shared task list with self-claiming |

| Best for | Research, parallel feature development, debugging |

This differs from simple subagents. Teammates aren’t just reporting results back. They share findings, challenge each other’s conclusions, and coordinate work autonomously.
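
To make the coordination model concrete, here is a minimal toy sketch in plain Python of a shared task list with self-claiming and direct peer messaging. It is not how Claude Code implements Agent Teams; the `Teammate` class and `run_team` function are hypothetical names invented for illustration, and threads stand in for separate Claude Code instances.

```python
import queue
import threading
from dataclasses import dataclass, field


@dataclass
class Teammate:
    """Hypothetical stand-in for one teammate with its own private state."""
    name: str
    inbox: queue.Queue = field(default_factory=queue.Queue)
    done: list = field(default_factory=list)


def run_team(task_names, teammate_names):
    # Shared task list: every teammate pulls from the same queue.
    tasks = queue.Queue()
    for task in task_names:
        tasks.put(task)
    team = {name: Teammate(name) for name in teammate_names}

    def work(me):
        while True:
            try:
                # Self-claiming: get_nowait() hands each task to exactly one worker.
                task = tasks.get_nowait()
            except queue.Empty:
                return
            me.done.append(task)
            # Peer-to-peer messaging: notify the other teammates directly,
            # without routing through a lead.
            for other in team.values():
                if other is not me:
                    other.inbox.put(f"{me.name} finished {task}")

    threads = [threading.Thread(target=work, args=(m,)) for m in team.values()]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return team


if __name__ == "__main__":
    team = run_team(["frontend", "backend", "tests"], ["alice", "bob"])
    for member in team.values():
        print(member.name, "completed", member.done)
```

Which teammate claims which task depends on thread scheduling, but every task is claimed exactly once. In this toy, the lead's job would be to read each teammate's `done` list afterwards and synthesize the results.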

Use cases flagged by Anthropic:

- Research and review (parallel investigation)

- New modules or features (teammates own separate pieces of work)

- Debugging with competing ideas

- Cross-layer coordination (frontend, backend, tests)

Claude Agent SDK

The Claude Agent SDK (renamed from Claude Code SDK) enables programmatic agent building. Core capabilities include:

- Context gathering: Agentic file search, semantic retrieval, subagents for parallelization

- Action taking: Custom tools, bash execution, MCP integrations

- Verification: Rules-based feedback, visual verification, LLM-as-judge

Multi-Agent Research System

Anthropic’s internal research system demonstrates the potential. In their engineering blog, they share that a multi-agent system with Claude Opus 4 as the lead and Claude Sonnet 4 subagents outperformed single-agent Claude Opus 4 by 90.2% on their research evaluation.

Token efficiency matters. A lot. Anthropic found that “token usage by itself explains 80% of the variance” in performance.

OpenAI’s Approach: Agents SDK and Enterprise Platforms

OpenAI has moved from experimental frameworks to production-ready infrastructure.

From Swarm to Agents SDK

OpenAI Swarm was an educational framework released to explore lightweight multi-agent orchestration. It introduced two core abstractions:

- Agents: Entities with instructions and tools

- Handoffs: Mechanism for agents to transfer control

In 2026, Swarm is best treated as a reference design. The OpenAI Agents SDK is the supported production path.

Agents SDK Core Primitives

The SDK operates on three fundamentals:

- Agents: LLMs configured with instructions, tools, guardrails, and handoffs

- Handoffs: Specialized tool calls enabling agent-to-agent control transfer

- Guardrails: Configurable safety validation for inputs and outputs

Additional capabilities:

- Sessions: Persistent memory management across runs

- Tracing: Built-in debugging and workflow visualization

- Function tools: Automatic schema generation from Python functions

- MCP integration: Works with Model Context Protocol servers
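
As a rough illustration of what "automatic schema generation from Python functions" involves, the sketch below derives a JSON-schema-like tool description from a function's signature and docstring using only the standard library. This is an assumption-laden approximation, not the Agents SDK's actual implementation; `tool_schema`, `JSON_TYPES`, and `get_weather` are hypothetical names.

```python
import inspect
from typing import get_type_hints

# Hypothetical mapping from Python annotations to JSON schema type names.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema(fn):
    """Build a tool description from a function's signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unannotated or unknown types fall back to "string" in this sketch.
        properties[name] = {"type": JSON_TYPES.get(hints.get(name), "string")}
        # Parameters without a default value are treated as required.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def get_weather(city: str, days: int = 1) -> str:
    """Return a short forecast for a city."""
    return f"{days}-day forecast for {city}"


if __name__ == "__main__":
    print(tool_schema(get_weather))
```

The point of the design is that the function signature becomes the single source of truth: the model sees the parameter names, types, and docstring without a hand-written schema to keep in sync.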

Multi-Agent Patterns

The SDK supports two primary orchestration patterns:

| Pattern | How It Works | Best For |

|---|---|---|

| Manager (agents as tools) | Central orchestrator invokes sub-agents and retains control | Maintaining global task view |

| Handoffs | Peer agents transfer control to specialists | Open-ended, conversational workflows |
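
The control-flow difference between the two patterns in the table above can be sketched without any SDK at all. The toy below uses plain functions as stand-ins for agents; `Reply`, `triage`, `billing`, `run_with_handoffs`, and `run_as_manager` are all hypothetical names, and no model is called.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reply:
    text: str
    handoff_to: Optional[str] = None  # name of the agent that should take over


# Each toy agent is a function from a user message to a Reply.
def triage(msg: str) -> Reply:
    if "refund" in msg:
        return Reply("routing to billing", handoff_to="billing")
    return Reply(f"triage answered: {msg}")


def billing(msg: str) -> Reply:
    return Reply(f"billing answered: {msg}")


AGENTS = {"triage": triage, "billing": billing}


def run_with_handoffs(start: str, msg: str, max_hops: int = 5):
    """Handoff pattern: control moves to whichever agent was named last."""
    current = start
    for _ in range(max_hops):
        reply = AGENTS[current](msg)
        if reply.handoff_to is None:
            return current, reply.text  # the specialist owns the final answer
        current = reply.handoff_to
    raise RuntimeError("too many handoffs")


def run_as_manager(msg: str):
    """Manager pattern: the orchestrator calls a sub-agent like a tool
    and keeps control of the final answer."""
    sub_result = billing(msg).text
    return "manager", f"summary of [{sub_result}]"


if __name__ == "__main__":
    print(run_with_handoffs("triage", "refund please"))
    print(run_as_manager("refund please"))
```

In the handoff run, the agent that produces the final answer is the specialist. In the manager run, it is always the orchestrator, which is why that pattern suits workflows that need a global view of the task.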

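    # Example: agents-as-tools (manager) pattern
    # Requires the openai-agents package, which is imported as `agents`.
    from agents import Agent

    # Specialized research sub-agent that handles specific research tasks
    research_agent = Agent(
        name="Research agent",
        instructions="Handle specific research tasks",
    )

    # The orchestrator exposes the sub-agent as a tool and retains control
    main_agent = Agent(
        name="Orchestrator",
        instructions="Orchestrate research tasks",
        tools=[
            research_agent.as_tool(
                tool_name="research_agent",
                tool_description="Specialized research sub-agent",
            )
        ],
    )

Enterprise Direction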

OpenAI has doubled down on enterprise agent infrastructure:

- AgentKit (DevDay 2025): Complete toolkit for building, deploying, and optimizing agents

- OpenAI Frontier (2026): Enterprise platform for agent execution, evaluation, and governance

- Responses API: Agent-native API replacing the Assistants API (sunset mid-2026)

- Agentic AI Foundation: Partnership with Linux Foundation for open standards

The AGENTS.md specification has been adopted by 60,000+ open-source projects, including major tools like Cursor, Devin, and GitHub Copilot.

Side-by-Side Comparison

| Aspect | Anthropic | OpenAI |

|---|---|---|

| Primary tool | Claude Agent SDK + Agent Teams | Agents SDK |

| Coordination model | Shared task list, peer messaging | Handoffs, agents-as-tools |

| Target use case | Coding agents (Claude Code) | General-purpose agents |

| Multi-agent communication | Teammates message directly | Handoffs transfer control |

| Production status | Agent Teams experimental | Agents SDK production-ready |

| Enterprise platform | - | Frontier, AgentKit |

| Open standards | - | AGENTS.md, Agentic AI Foundation |

What This Means for Developers

Both companies are solving agent-to-agent coordination within their ecosystems. But there’s a gap neither fully addresses: orchestrating multiple independent agent sessions at scale.

Agent Teams coordinates Claude Code instances, but every teammate is tied to a single lead session, even though each has its own context window. The Agents SDK manages handoffs within a workflow, but assumes a unified control plane.

This is where platform-level orchestration becomes relevant. Tools like Flocker Dashboard connect individual agent frameworks, providing:

- Task-level agent management

- Permission controls upfront

- Real-time, cross-agent visibility

The native orchestration tools from Anthropic and OpenAI are foundational. They handle coordination within agent sessions. Platform orchestration handles coordination across sessions.

Looking Ahead

The agent orchestration space is moving fast. A few trends worth watching:

Standardization efforts: OpenAI’s Agentic AI Foundation and AGENTS.md adoption signal a push toward interoperability. This matters for teams running agents from multiple providers.

Enterprise tooling: Both companies are building governance, evaluation, and deployment infrastructure. Expect more focus on observability and compliance.

Research gains: Anthropic’s 90.2% improvement from multi-agent research systems suggests we’re early in understanding optimal coordination patterns.

Token efficiency: Both Anthropic and OpenAI emphasize that multi-agent isn’t always better. The overhead of coordination can outweigh benefits for simpler tasks.

The right question isn’t “which orchestration framework is better?” It’s “what agent is best for this particular task?”

For many developers, the answer will be multiple layers: native SDK coordination to get the most out of each provider’s agents (Anthropic, OpenAI), plus platform-level orchestration for observability and task operations.

Sources: